More reason to outlaw Impact Factors from personnel discussions

June 14th, 2012

Pity the poor, beleaguered “Impact Factor™” (IF), a secret mathematistic formula originally intended to serve as a proxy for journal quality. No one seems to like it much. The manifold problems with IF have been rehearsed to death:

- The calculation isn’t a proper average.

- The calculation is statistically inappropriate.

- The calculation ignores most of the citation data.

- The calculated values aren’t reproducible.

- Citation rates, and hence Impact Factors, vary considerably across fields, making cross-discipline comparison meaningless.

- Citation rates vary across languages. Ditto.

- IF varies over time, and varies differentially for different types of journals.

- IF is manipulable by publishers.

The study by the International Mathematical Union is especially trenchant on these matters, as is Bjorn Brembs’ take. I don’t want to pile on, just look at some new data that shows that IF has been getting even more problematic over time.

One of the most egregious uses of IF is in promotion and tenure discussions. It’s been understood for a long time that the Impact Factor, given its manifest problems as a method for ranking journals, is completely inappropriate for ranking articles. As the European Association of Science Editors has said:

> Therefore the European Association of Science Editors recommends that journal impact factors are used only – and cautiously – for measuring and comparing the influence of entire journals, but not for the assessment of single papers, and certainly not for the assessment of researchers or research programmes either directly or as a surrogate.

Even Thomson Reuters says:

> The impact factor should be used with informed peer review. In the case of academic evaluation for tenure it is sometimes inappropriate to use the impact of the source journal to estimate the expected frequency of a recently published article.

“Sometimes inappropriate.” Snort.

|

| …the money chart… |

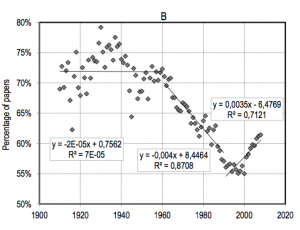

Check out the money chart from the recent paper “The weakening relationship between the Impact Factor and papers’ citations in the digital age” by George A. Lozano, Vincent Lariviere, and Yves Gingras.

They address the question of whether the most highly cited papers tend to appear in the highest Impact Factor journals, and how that has changed over time. One of their analyses takes the papers that fall in the top 5% by number of citations over the two-year period following publication, and shows what percentage of these do not appear in the top 5% of journals as ranked by Impact Factor. If Impact Factor were a perfect reflection of the future citation rate of the articles in a journal, this number would be zero.
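For readers who want to see the shape of that calculation, here is a rough Python sketch. It is not the authors' code: the function names, the input layout (a list of `(journal, citations)` pairs plus a journal-to-IF dictionary), and the handling of ties at the cutoff are all my own assumptions for illustration.

```python
from typing import Dict, List, Tuple


def top_fraction_cutoff(values: List[float], fraction: float) -> float:
    """Return the smallest value that still ranks in the top `fraction`.

    Assumption: the top group always contains at least one item.
    """
    ranked = sorted(values, reverse=True)
    k = max(1, int(len(ranked) * fraction))
    return ranked[k - 1]


def pct_top_papers_outside_top_journals(
    papers: List[Tuple[str, int]],
    journal_if: Dict[str, float],
    fraction: float = 0.05,
) -> float:
    """Percentage of top-cited papers NOT published in top-IF journals.

    papers     -- hypothetical input: (journal name, two-year citation count)
    journal_if -- hypothetical input: journal name -> Impact Factor
    Ties at either cutoff are counted as being in the top group (an assumption).
    """
    paper_cut = top_fraction_cutoff([cites for _, cites in papers], fraction)
    journal_cut = top_fraction_cutoff(list(journal_if.values()), fraction)

    # Journals of the papers that make the top-cited group.
    top_paper_journals = [j for j, cites in papers if cites >= paper_cut]
    # Of those, the ones whose journal misses the top-IF group.
    outside = [j for j in top_paper_journals if journal_if[j] < journal_cut]

    return 100.0 * len(outside) / len(top_paper_journals)
```

If Impact Factor tracked article citations perfectly, this function would return 0.0 for every publication year; the chart plots, in effect, how far from zero the real figure has been.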

As it turns out, the percentage has been extremely high over the years. The majority of top papers fall into this group, indicating that restricting attention to top Impact Factor journals doesn’t nearly cover the best papers. This by itself is not too surprising, though it doesn’t bode well for IF.

More interesting is the trajectory of the numbers. At one point, roughly up through World War II, the percentages were in the 70s and 80s. Three quarters of the top-cited papers were not in the top IF journals. After the war, a steady consolidation of journal brands, along with the invention of the formal Impact Factor in the 60s and its increased use, led to a steady decline in the percentage of top articles in non-top journals. Basically, a journal’s imprimatur, and its IF along with it, became a better and better indicator of the quality of the articles it published. (Better, but still not particularly good.)

This process ended around 1990. As electronic distribution of individual articles took over for distribution of articles bundled within printed journal issues, it became less important which journal an article appeared in. Articles more and more lived and died by their own inherent quality rather than by the quality signal inherited from their publishing journal. The pattern in the graph is striking.

The important ramification is that the Impact Factor of a journal is an increasingly poor metric of quality, especially at the top end. And it is likely to get even worse. Electronic distribution of individual articles is only increasing, and as the Impact Factor signal decreases, there is less motivation to publish the best work in high IF journals, compounding the change.

Meanwhile, computer and network technology has brought us to the point where we can develop and use metrics that serve as proxies for quality at the individual article level. We don’t need to rely on journal-level metrics to evaluate articles.

Given all this, promotion and tenure committees should proscribe consideration of journal-level metrics — including Impact Factor — in their deliberations. Instead, if they must use metrics, they should use article-level metrics only, or better yet, read the articles themselves.

June 17th, 2012 at 7:40 am

Absolutely. I wrote on this topic in 2006: impact does not accurately reflect overall influence and primacy in the field, which is the point of tying impact factors to tenure. Overall, web-published journals tend to have more article “connections” than those in print form.

http://firstmonday.org/htbin/cgiwrap/bin/ojs/index.php/fm/article/viewArticle/1340

August 18th, 2012 at 2:38 pm

This issue must be rethought because in developing countries journals proliferate with almost no evaluation of articles. In this sense, the impact factor is important.